Tech behemoth OpenAI has touted its AI-powered transcription tool Whisper as having “human-level robustness and accuracy.”

But Whisper has one major flaw: It’s prone to making up chunks of text or even entire sentences, according to interviews with more than a dozen software engineers, developers and academic researchers. Those experts said some of the made-up text – known in the industry as hallucinations – could include racial slurs, violent rhetoric and even imagined medical treatments.

Experts said such fictions are problematic because Whisper is being used in a host of industries around the world to translate and transcribe interviews, generate text in popular consumer technologies and create captions for videos.

More troubling, they said, is a rush by medical centers to use Whisper-based tools to transcribe patient consultations with doctors, despite OpenAI’s warnings that the tool should not be used in “high-risk areas.”

The full extent of the problem is hard to discern, but researchers and engineers said they often encounter Whisper’s hallucinations in their work. A University of Michigan researcher conducting a study of public meetings, for example, said he found hallucinations in 8 out of every 10 audio transcriptions he inspected before he began trying to improve the model.

A machine learning engineer said he initially detected hallucinations in about half of the 100-plus hours of Whisper transcripts he analyzed. A third developer said he found hallucinations in nearly every one of the 26,000 transcripts he created with Whisper.

The problems persist even on short, well-recorded audio samples. A recent study by computer scientists found 187 hallucinations in more than 13,000 clear audio fragments they examined.

This trend would lead to tens of thousands of erroneous transcriptions over millions of recordings, the researchers said.

Such mistakes can have “really serious consequences,” especially in hospital settings, said Alondra Nelson, who led the White House Office of Science and Technology Policy for the Biden administration until last year.

“No one wants a misdiagnosis,” said Nelson, a professor at the Institute for Advanced Study in Princeton, New Jersey. “There has to be a higher bar.”

Whisper is also used to create closed captions for the deaf and hard of hearing – a population at particular risk for erroneous transcriptions.

That’s because deaf and hard-of-hearing people have no way of identifying fabrications that are “hidden among all this other text,” said Christian Vogler, who is deaf and directs Gallaudet University’s Technology Access Program.

OpenAI urged to address the problem

The proliferation of such hallucinations has led experts, advocates and former OpenAI employees to call for the federal government to consider AI regulations. At the very least, they said, OpenAI should address the flaw.

“This seems solvable if the company is willing to prioritize it,” said William Saunders, a San Francisco-based research engineer who left OpenAI in February over concerns with the company’s direction. “It’s problematic if you put this out there and people are overconfident about what it can do and integrate it into all these other systems.”

An OpenAI spokesperson said the company is constantly studying how to reduce hallucinations and praised the researchers’ findings, adding that OpenAI incorporates feedback into model updates.

While most developers assume transcription tools misspell words or make other mistakes, engineers and researchers said they’ve never seen another AI-powered transcription tool hallucinate as much as Whisper.

Whisper hallucinations

The tool is integrated into several versions of OpenAI’s flagship chatbot, ChatGPT, and is an integrated offering on Oracle and Microsoft’s cloud computing platforms, which serve thousands of companies worldwide. It is also used to transcribe and translate text in many languages.

AP

In the last month alone, a recent version of Whisper was downloaded over 4.2 million times from the open-source artificial intelligence platform HuggingFace. Sanchit Gandhi, a machine learning engineer there, said Whisper is the most popular open-source speech recognition model and is integrated into everything from call centers to voice assistants.

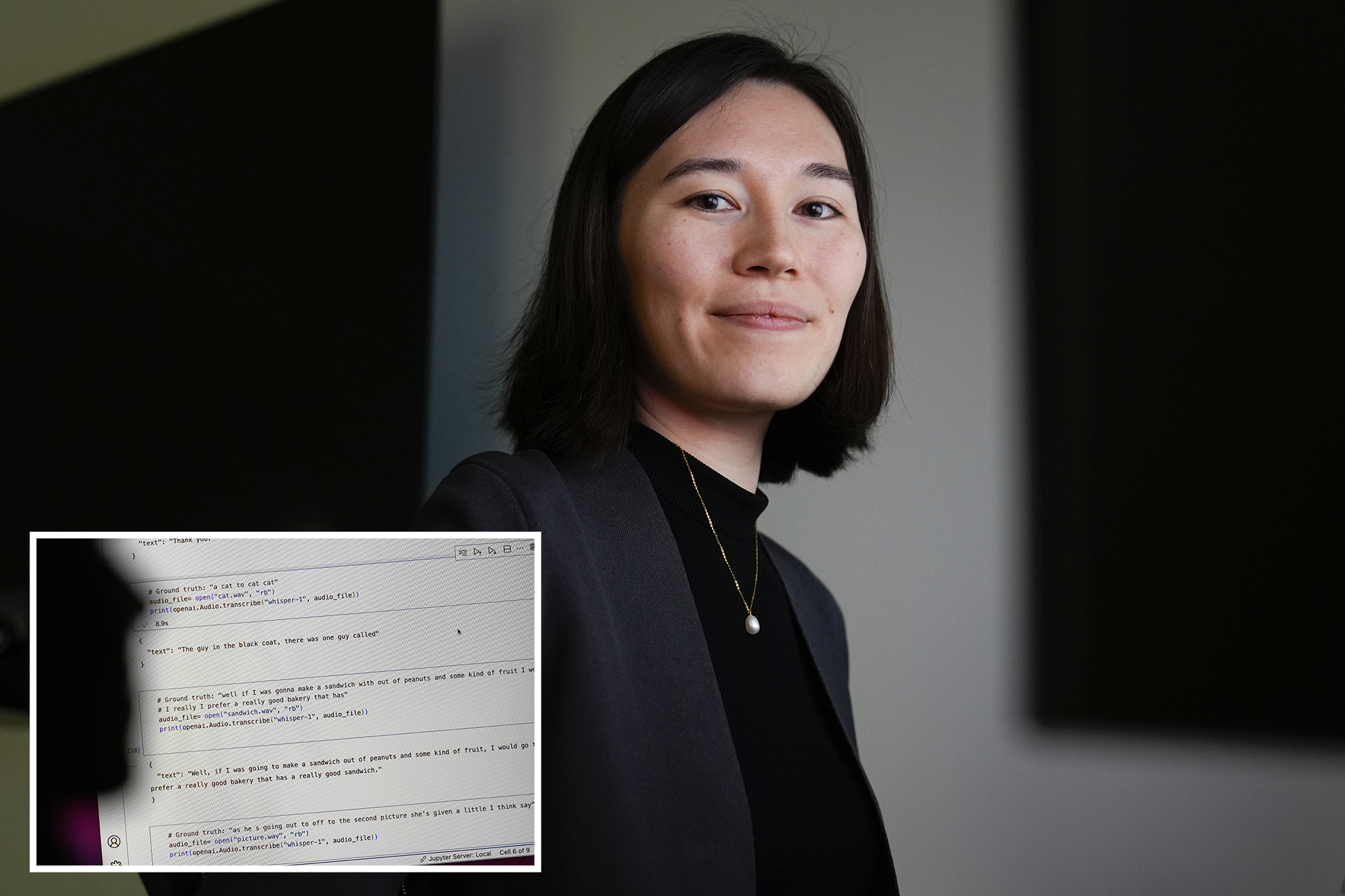

Professors Allison Koenecke of Cornell University and Mona Sloane of the University of Virginia examined thousands of short excerpts they obtained from TalkBank, a research repository hosted at Carnegie Mellon University. They determined that nearly 40% of hallucinations were harmful or distressing because the speaker could be misinterpreted or misrepresented.

In one example they discovered, a speaker said: “He, the boy, was going to take the umbrella, I’m not sure exactly.”

But the transcription software added: “He took a large part of a cross, a small, small part … I’m sure he didn’t have a terror knife, so he killed a number of people.”

A speaker on another recording described “two more girls and a lady.” Whisper made up additional comments on race, adding “two other girls and a lady, um, who were black.”

In a third transcript, Whisper invented a non-existent drug called “hyperactivated antibiotics.”

Researchers aren’t sure why Whisper and similar tools hallucinate, but software developers said the hallucinations tend to occur amid pauses, background sounds or music playing.

OpenAI recommended in its online disclosures against using Whisper in “decision-making contexts, where flaws in accuracy can lead to pronounced flaws in results.”

Transcription of appointments with the doctor

That warning hasn’t stopped hospitals or medical centers from using speech-to-text models, including Whisper, to transcribe what’s said during doctor visits so that medical providers can spend less time on note-taking or report writing.

Over 30,000 clinicians and 40 health systems, including the Mankato Clinic in Minnesota and Children’s Hospital in Los Angeles, have begun using a Whisper-based tool built by Nabla, which has offices in France and the US.

That tool was fine-tuned on medical language to transcribe and summarize patient interactions, said Nabla’s chief technology officer, Martin Raison.

Company officials said they are aware that Whisper can hallucinate and are mitigating the problem.

It’s impossible to compare Nabla’s AI-generated transcript to the original recording because Nabla’s tool deletes the original audio for “data security reasons,” Raison said.

Nabla said the tool has been used to transcribe about 7 million medical visits.

Saunders, the former OpenAI engineer, said the deletion of the original audio can be worrisome if transcripts aren’t double-checked or clinicians can’t access the recording to verify they’re accurate.

“You can’t catch mistakes if you take away the ground truth,” he said.

Nabla said no model is perfect and that their model currently requires medical providers to quickly edit and approve transcribed notes, but that could change.

Privacy concerns

Because patients’ appointments with their doctors are confidential, it’s hard to know how the AI-generated transcripts are affecting them.


A California state lawmaker, Rebecca Bauer-Kahan, said she took one of her children to the doctor earlier this year and refused to sign a form from the health network that sought her permission to share audio of the consultation with vendors that included Microsoft Azure, the cloud computing system run by OpenAI’s largest investor. Bauer-Kahan didn’t want such intimate medical conversations shared with tech companies, she said.

“The release was very specific that for-profit companies would be eligible to have this,” said Bauer-Kahan, a Democrat who represents a swath of San Francisco suburbs in the state Assembly. “I was like ‘absolutely not.’”

John Muir Health spokesman Ben Drew said the health system complies with state and federal privacy laws.


Image Source : nypost.com